Image Generated Using Nano Banana

Silicon Data Is No Longer A Byproduct

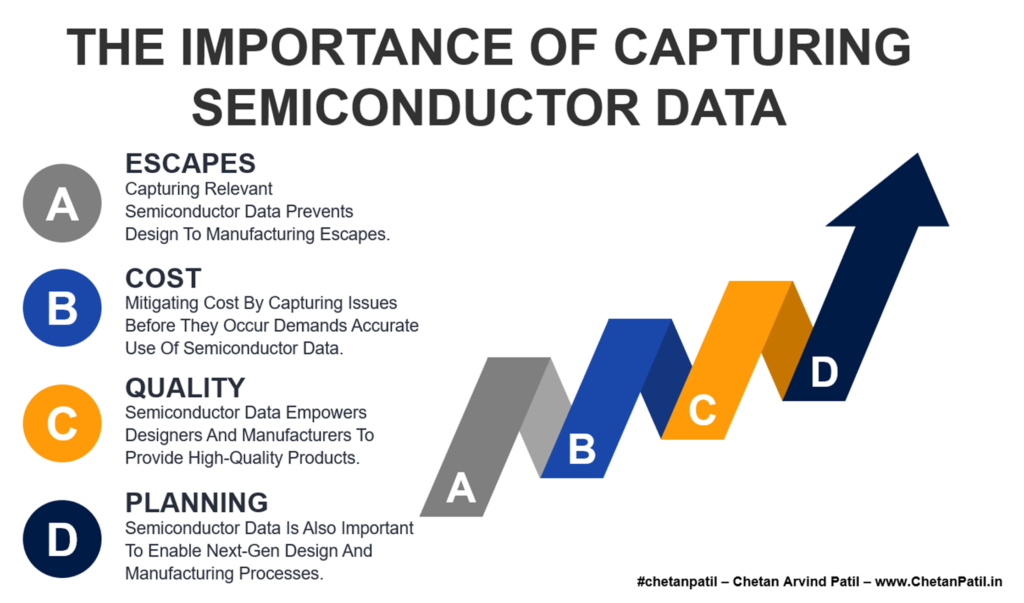

In the modern semiconductor industry, data no longer emerges passively from manufacturing. It has become a primary output of the entire silicon development process. As technology nodes shrink, architectures grow more complex, and packaging shifts toward heterogeneous integration, every stage of the product lifecycle relies on high-fidelity data to guide decisions. From early silicon bring-up to high-volume production, data now dictates yield learning, reliability confidence, and time-to-market.

What distinguishes semiconductor data from data in most other industries is the cost of generating it. Each meaningful data point is inseparably tied to physical silicon, advanced equipment, and tightly controlled manufacturing and test environments. Unlike digital or software ecosystems, semiconductor data cannot be created or scaled without first committing substantial capital to fabs, process tools, and test infrastructure.

This shift has quietly transformed silicon data from a technical necessity into a material cost driver. Every wafer processed and every test executed exists not only to enable product shipment, but also to generate data that validates design assumptions, manufacturing readiness, and quality margins.

Thus, in today’s semiconductor economics, data no longer comes as a byproduct of silicon. It is one of the most expensive outputs of the silicon lifecycle itself.

Hidden Cost Of The Semiconductor Data Lifecycle

Every semiconductor data point follows a structured lifecycle that quietly accumulates cost long before it delivers insight. Data generation begins with physical silicon, qualified test programs, and production-ready environments. Wafers must be fabricated, handled, and tested on specialized equipment, while test programs must be developed and maintained to produce meaningful results. Each of these steps consumes capital, time, and highly constrained resources.

Once data is generated, it cannot be used in its raw form. It must be collected, cleaned, conditioned, and profiled before engineers can trust it for decision-making. This requires dedicated data flows, software infrastructure, and compute resources capable of handling large volumes of test and process data. Traceability adds another layer of complexity. Data must be mapped back to its originating wafer, lot, and die so that results remain actionable across manufacturing, yield analysis, and failure investigation.
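The cleaning and traceability steps described above can be sketched in a few lines of Python. This is a minimal illustration, not a production data flow: the names `DieKey`, `Measurement`, `clean`, and `index_by_die` are hypothetical, the records are made up, and real test data would normally arrive in an industry test-data format rather than as in-memory objects.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DieKey:
    """Hypothetical traceability key: the lot, wafer, and die a result came from."""
    lot_id: str
    wafer_id: int
    die_x: int
    die_y: int


@dataclass
class Measurement:
    key: DieKey
    test_name: str
    value: Optional[float]  # None models a missing or unreadable measurement


def clean(records):
    """Drop records that carry no usable measurement."""
    return [r for r in records if r.value is not None]


def index_by_die(records):
    """Group results by die so every value stays traceable to its origin."""
    by_die = defaultdict(dict)
    for r in records:
        by_die[r.key][r.test_name] = r.value
    return dict(by_die)


# Made-up records, for illustration only.
raw = [
    Measurement(DieKey("LOT_A", 1, 0, 0), "leakage_ua", 1.2),
    Measurement(DieKey("LOT_A", 1, 0, 0), "fmax_mhz", 950.0),
    Measurement(DieKey("LOT_A", 1, 0, 1), "leakage_ua", None),
    Measurement(DieKey("LOT_A", 2, 3, 4), "fmax_mhz", 910.0),
]

traceable = index_by_die(clean(raw))
```

Keeping the lot, wafer, and die coordinates together in one immutable key is one way to make sure the same lookup works for manufacturing, yield analysis, and failure investigation alike.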

By the time silicon data is ready for engineering analysis, a significant portion of its total cost has already been incurred. These costs are often invisible because they are spread across fabrication, test, data infrastructure, and process engineering teams. As a result, the semiconductor data lifecycle becomes an embedded cost structure, one that grows with every new node, package, and product generation.
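One way to make this embedded cost visible is to roll the spend from each team into a single cost per usable data point. The sketch below assumes such a roll-up is possible. The function name `cost_per_data_point`, the team labels, and every number are placeholders in arbitrary units, not industry figures.

```python
def cost_per_data_point(cost_by_team, data_points):
    """Return (total cost, cost per data point) for costs spread across teams."""
    if data_points <= 0:
        raise ValueError("data_points must be positive")
    total = sum(cost_by_team.values())
    return total, total / data_points


# Placeholder values in arbitrary units, chosen only to show the arithmetic.
cost_by_team = {
    "fabrication": 600.0,
    "test": 250.0,
    "data_infrastructure": 100.0,
    "process_engineering": 50.0,
}

total, per_point = cost_per_data_point(cost_by_team, data_points=4000)

# Share of the total carried by each team, which is what usually stays hidden.
share_by_team = {team: cost / total for team, cost in cost_by_team.items()}
```

With these placeholder inputs the total is 1000.0 units and each data point carries 0.25 units, even though no single team's budget shows that figure.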

Analytics And Infrastructure Turn Data Into Ongoing Spend

The cost of semiconductor data does not peak when data is captured. It accelerates once analysis begins. Extracting value from silicon data requires advanced analytics platforms, visualization layers, and continuous monitoring systems that operate across development and production.

These capabilities are essential for yield learning, root cause isolation, and correlation with historical data, but they also introduce recurring operational costs.

Data volumes continue to grow with higher pin counts, multi-die packages, and system-level test coverage. This forces semiconductor teams to invest in scalable compute, storage, and networking infrastructure.

| Data Capability | Why It Is Required | Cost Impact |
|---|---|---|
| Advanced Analytics | Enables yield learning, anomaly detection, and correlation across data sets | High recurring software and compute cost |
| Visualization | Accelerates decision-making and cross-team alignment | Dedicated tools and internal development effort |
| Real-Time Monitoring | Detects process drift and equipment issues early | Always-on infrastructure and integration overhead |
| Historical Data Correlation | Connects new failures to past silicon behavior | Long-term storage and data retrieval cost |
| Scalable Infrastructure | Supports growing data volume and complexity | Continuous investment in compute and storage |

At the same time, analytics tools evolve rapidly, driving recurring licensing costs and continuous skill upgrades for engineering teams. What was once a one-time investment has become a permanent operating expense.

As semiconductor data moves from isolated analysis to always-available intelligence, analytics and infrastructure become permanent cost drivers. The challenge is no longer just generating data, but sustaining the systems needed to extract value from it continuously.

Why Semiconductor Data Has Become A Structural Cost

The cost of semiconductor data is not a temporary phase tied to a single technology node or product cycle. It is structural. As devices scale, architectures diversify, and packaging complexity increases, the resources required to generate, analyze, and retain silicon data expand in parallel.

Each new node, material, or integration approach introduces additional variables that must be measured, validated, and monitored through real silicon data.

Long-term data retention further reinforces this cost structure. Semiconductor data cannot be discarded once a product ships. Future designs often depend on historical data for reference, comparison, and risk reduction. This forces sustained investment in storage systems, data governance, and retrieval workflows that span multiple product generations. The value of this data grows over time, but so does the cost of maintaining it.

As a result, semiconductor companies are increasingly required to think of data as an engineered system rather than an operational byproduct. Managing cost now depends on how efficiently the data lifecycle is designed, integrated, and scaled alongside silicon development.

In this reality, semiconductor data is no longer just a technical asset. It is a long-term economic commitment embedded in the industry’s fabric.